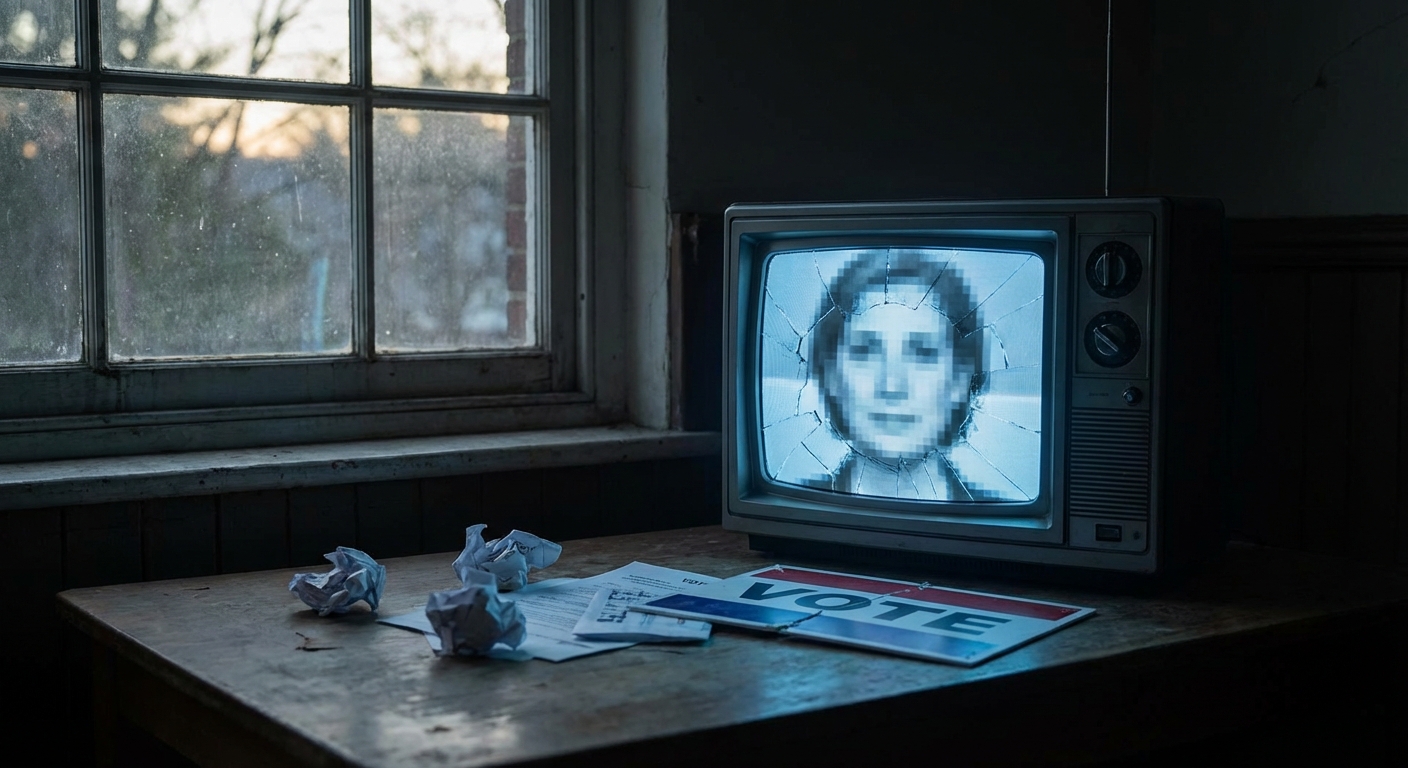

7 Terrifying Ways AI Deepfakes Will Destroy Election Integrity in the Next 24 Hours

Democracy is no longer a battle of ideas. It’s a battle of bits.

The next 24 hours are the most dangerous in the history of the modern republic. We are entering the "Truth Decay" window—a period where the time it takes to debunk a lie is longer than the time it takes for that lie to flip an election.

Forget what you know about Photoshop. Forget "uncanny valley" CGI.

The barrier to entry is gone. The cost is near zero. The speed is instantaneous.

1. The "Zero-Day" Audio Leak

The most effective weapon won't be a video. It will be a grainy, low-quality audio file.

At 11:00 PM tonight, a recording will "leak" on X. It will sound like a candidate behind closed doors. They will be using a slur, discussing a bribe, or admitting to a crime.

This is the "Hot Mic" Deepfake.

With tools like ElevenLabs or RVC (Retrieval-based Voice Conversion), I can produce a convincing clone of a politician’s voice from as little as 30 seconds of public speech.

By the time forensic experts verify the audio is synthetic, the polls will have been open for six hours. The damage is permanent.
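To put rough numbers on that timeline, here is a minimal sketch. The 11:00 PM leak and the "six hours after polls open" verification come from the scenario above; the 7:00 AM poll opening, the placeholder calendar dates, and the `exposure_window` helper are assumptions made up for illustration.

```python
from datetime import datetime, timedelta

def exposure_window(leak_time, polls_open, debunk_time):
    """Return (hours the clip circulates unverified,
               hours of that span that overlap open polls)."""
    unverified = debunk_time - leak_time
    # If the debunk lands before polls open, the overlap is zero.
    voting_overlap = max(debunk_time - polls_open, timedelta(0))
    return (unverified.total_seconds() / 3600,
            voting_overlap.total_seconds() / 3600)

# Placeholder dates; only the clock times matter here.
leak = datetime(2000, 1, 1, 23, 0)        # 11:00 PM leak (from the scenario)
polls_open = datetime(2000, 1, 2, 7, 0)   # 7:00 AM opening (assumed)
debunk = polls_open + timedelta(hours=6)  # verified six hours into voting

total, during_voting = exposure_window(leak, polls_open, debunk)
print(f"Unverified for {total} hours, {during_voting} of them during voting")
```

Under these assumptions the clip circulates for 14 hours before it is confirmed synthetic, and 6 of those hours fall while people are already voting.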

2. Hyper-Local Robocall Suppression

In 2020, you got a text. In 2024, you’ll get a call from your mother.

Or at least, it will sound like her.

Using automated API calls, an operator can send thousands of voters personalized voice messages in the middle of the night.

"The polling location at the library has been moved due to a gas leak." "If you haven't voted by 10 AM, your registration is flagged for audit."

It’s not a mass broadcast. It’s a surgical strike. It doesn't need to flip a million votes. It only needs to confuse 500 people in three key counties.

3. The "Health Scare" Visual Mirage

Tomorrow morning, a 10-second clip will go viral on TikTok. It will show a candidate collapsing on stage, slurring their words, or suffering a visible neurological event during a private walkthrough.

It won't be a high-production movie. It will be "Vertical Video"—shaky, blurry, and filmed from a distance.

The goal isn't to prove the candidate is unfit. The goal is to trigger an immediate, visceral "What if?" in the minds of undecided voters. Once that seed is planted, no "Official Statement" can pull the roots out.

4. The Language-Bridge Psyop

Most fact-checking resources are focused on English-language mainstream media. The real war is happening in the "Dark Social" of immigrant communities.

WhatsApp groups. Telegram channels. WeChat.

Deepfakes are now being generated in dozens of languages and dialects: perfectly translated, perfectly lip-synced.

A candidate might appear in a video speaking fluent Spanish, Mandarin, or Vietnamese, saying something completely different from their English-language platform. Or, worse, a fake video of an opponent disparaging a specific ethnic group will circulate in these closed loops.

Because these videos live in encrypted chats, the campaigns don't even know they exist until the votes are counted. It’s an invisible landslide.

5. The Ballot Box Sabotage Hoax

Expect "POV" footage from a poll worker’s perspective.

The video will show suitcases of ballots being "shredded" or machines being "reprogrammed" with a USB stick.

It doesn't matter if the video is fake. It doesn't even matter if it’s debunked in an hour. The footage will be the "proof" needed to incite real-world protests at the polls.

6. The "Sleeper Cell" Endorsement

Imagine a video of a retired, highly respected general or a former president from the opposing party suddenly switching sides at the 11th hour.

"I’ve seen the classified data," they’ll say. "I cannot stay silent any longer."

This is the "Moral Permission" deepfake. It targets the "soft" voters who were looking for an excuse to defect but felt social pressure to stay the course.

A 60-second clip of a trusted figure giving them "permission" to flip is all it takes to shift the margin in a close race.

7. The Liar’s Dividend

A candidate is caught on a real hot mic? "AI-generated." A candidate is filmed at a secret donor meeting? "Digital fabrication."

When everything can be fake, nothing has to be true. This is the death of accountability. We are moving into a post-truth era where the winner isn't the one with the best policy, but the one with the best "Denial Infrastructure."

The Prediction

Within the next 24 hours, at least one "High-Impact Synthetic Event" (HISE) will occur in a swing state.

It won't come from a government. It will come from a bored 19-year-old with a $50-a-month subscription to a GPU farm and a grudge.

The disruption isn't coming. It’s already here. It’s just waiting for the clock to hit midnight.

The era of "seeing is believing" is officially over. We are now in the era of "believing is seeing."

How will you know what's real tomorrow?