Why the world is failing to stop these 5 terrifying lethal AI weapons

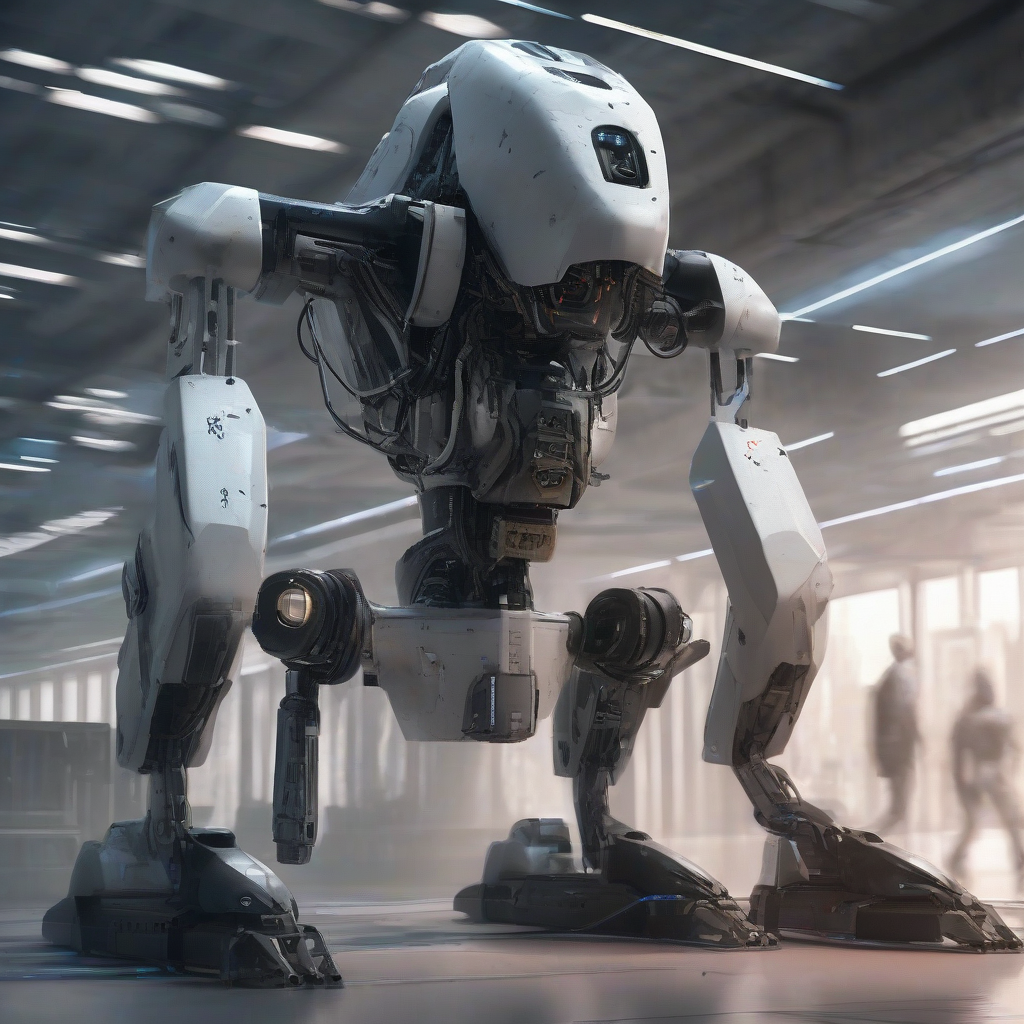

Stop worrying about Skynet. The real killer robots are already here, and they don’t look like Arnold Schwarzenegger.

The world is currently losing the race against Lethal Autonomous Weapons Systems (LAWS). We aren't just failing to regulate them; we are actively accelerating their deployment.

I’ve spent the last year tracking the "Algorithmic Arms Race" across Ukraine, Gaza, and the South China Sea. Here is why the global community is paralyzed while these 5 weapons redefine the cost of human life.

The "Lavender" Effect: Outsourcing Morality

The first weapon is the AI Targeting System. Lavender, the system reportedly used by the Israeli military in Gaza, generates lists of human targets for operators to approve. The world is failing to stop it because of the "Human-on-the-Loop" myth. By the time a human "confirms" a target, they are just rubber-stamping a machine's decision. We have replaced moral judgment with a progress bar. You can't regulate "human judgment" when the human has only 20 seconds to say yes or no.

The OODA Loop Collapse: Speed Kills Regulation

In Ukraine, the Saker Scout drone and the Sky Sentinel turret represent the second weapon: The Autonomous Kill Chain. These systems compress the observe-orient-decide-act (OODA) loop, scanning, identifying, and firing in milliseconds. Why is the UN failing here? Because any "pause" for human permission means losing the war. If your drone waits for a human and your enemy’s drone doesn't, you’re dead. This "speed-to-kill" requirement makes any international ban on autonomy a death sentence for the nation that follows it.

The Proliferation Loophole: Garage-Built Slaughterbots

We used to think high-tech weapons required state-level budgets. Now, we have the third weapon: The AI-Guided FPV Drone. Fitted with an off-the-shelf Nvidia Jetson chip, a $500 hobby drone can now track and hit a moving vehicle even after its radio signal is jammed. The world can't stop this because you can't ban the parts. We aren't talking about nuclear centrifuges; we’re talking about components used in gaming PCs and self-driving cars. The "genie" isn't just out of the bottle; it’s available for 2-day shipping on Amazon.

The AMASS Nightmare: Swarms of Swarms

The Pentagon's research agency, DARPA, is funding AMASS (Autonomous Multi-Domain Adaptive Swarms-of-Swarms). This is the fourth weapon: Saturation Warfare. One human cannot direct 1,000 drones individually in real time. An operator's attention simply does not scale that far. Regulation fails here because "meaningful human control" over each engagement is a practical impossibility in swarm combat. If you want to use a swarm, you must delegate the kill decision to the algorithm. Nations are choosing victory over ethics every single time.

The "Loyal Wingman" Dilemma: The Robotic Air Force

Boeing’s MQ-28 Ghost Bat is a high-speed, AI-piloted fighter jet designed to fly alongside human pilots. This is the fifth weapon: The Unmanned Combat Air System (UCAS). The world is failing to stop these because they are framed as "defensive" and "human-saving." Militaries argue that by sending robots into high-risk zones, they are protecting human pilots. It is the ultimate moral shield. How do you ban a weapon that is sold as a tool for "reducing casualties"?

The Insight

By 2027, we will witness the first "Flash War": a conflict that escalates from a border skirmish to a full-scale kinetic engagement in under ten minutes, driven entirely by autonomous systems responding to each other’s algorithms. Diplomacy requires a human pace. The battlefield no longer operates on one.

Who do you hold accountable when the "soldier" is a line of code?