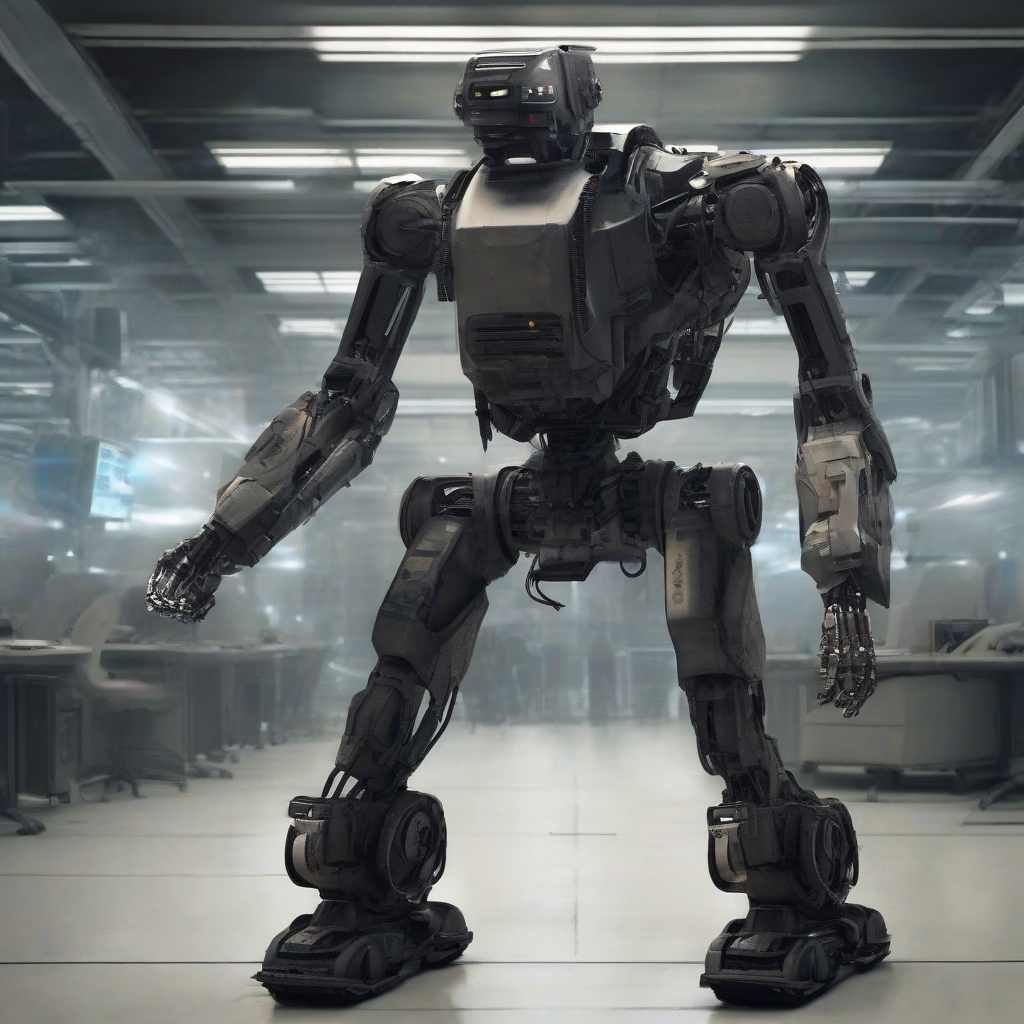

Why Global Security is Failing: 7 Terrifying Reasons Autonomous Killer Robots Can’t Be Stopped

The Geneva Conventions are effectively obsolete.

While world leaders argue over paper treaties in air-conditioned rooms, the nature of survival has changed. We have entered the era of Software-Defined Warfare.

The math is simple: Humans are too slow, too emotional, and too expensive.

Here are the 7 reasons why autonomous killer robots are now a mathematical certainty.

The Death of the OODA Loop

In traditional warfare, the side that cycles through the OODA loop (Observe, Orient, Decide, Act) fastest usually wins. For a human pilot, one cycle takes seconds. For an AI, it takes milliseconds.

When your adversary deploys a swarm of 5,000 drones that can "Decide" and "Act" before your brain even "Observes" the threat, you have two choices:

- Accept defeat.

- Remove the human from the loop.

Major military powers are increasingly choosing option two. We are witnessing the collapse of human reflexes. In modern dogfights or drone swarms, a "Human-in-the-loop" is no longer a moral safeguard; it's a hardware bottleneck. By the time a human operator clicks "Confirm," the war is already over.

The Dual-Use Regulation Mirage

You can’t ban what you can’t define. Unlike nuclear centrifuges, which are hard to hide, the "brain" of a killer robot is just code.

The computer vision techniques that help a Tesla avoid a pedestrian are largely the same ones that help a loitering munition recognize a target's uniform. This is the Dual-Use Trap: any ban broad enough to cover the weapon would also cover the car.

The Proliferation Paradox

We used to think high-tech meant high-cost. We were wrong.

Ukraine is currently deploying "drone walls" and testing AI-powered modules like the Skynode S: compact computers that can turn a $500 hobby drone into a self-guiding hunter-killer. The US just funded a contract for 33,000 of these modules.

This is the democratization of mass destruction.

- Nuclear weapons require state-level enrichment facilities.

- Killer robots require a GitHub account and a 3D printer.

When the barrier to entry drops to zero, deterrence fails. You can’t "sanction" a swarm of plastic drones built in a garage.

The Accountability Black Hole

Who do you court-martial when an algorithm commits a war crime?

Current International Humanitarian Law is built on the concept of intent. Robots don't have intent; they have "optimization parameters." If a drone swarm misidentifies a school bus as a troop transport because of a "glitch" in its training data, the blame is diffused across a thousand stakeholders:

- The coder who wrote the script.

- The data scientist who cleaned the training set.

- The general who deployed the swarm.

- The manufacturer of the chip.

When everyone is responsible, no one is. We are moving toward a world of "anonymous atrocities," where the victims are real but the perpetrators are lines of code that have already been deleted.

Unpredictability by Design

We are building systems we don't actually understand. Neural networks are "black boxes." We know the input and we see the output, but the logic in between is a billion-parameter mystery.

On the battlefield, this means a robot might strike a civilian target simply because some quirk of its training made that look optimal, with no grasp of the geopolitical fallout. We aren't just giving robots guns; we're giving them the power to start World War III based on a rounding error.

The First-Mover Death Sentence

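Geopolitics is playing out as a textbook "Prisoner's Dilemma." It is not a zero-sum game: both sides would be safer if neither built autonomous weapons. But the nation that restrains while its rival builds is left defenseless, so each side's safest individual move is to build.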
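To see why restraint collapses, the dilemma can be written as a toy payoff table. The sketch below is a minimal Python illustration under stated assumptions: two hypothetical strategies, `restrain` and `build`, and the conventional textbook Prisoner's Dilemma payoffs (5, 3, 1, 0), which are illustrative numbers, not estimates of any real arms race. It computes each side's best response and finds the pure-strategy equilibrium.

```python
from itertools import product

# Hypothetical strategies for each nation in a symmetric two-player game.
STRATEGIES = ("restrain", "build")

# Illustrative textbook payoffs (higher is better), NOT real-world estimates.
# PAYOFF[(mine, theirs)] -> my payoff.
PAYOFF = {
    ("restrain", "restrain"): 3,  # mutual restraint: best shared outcome
    ("restrain", "build"): 0,     # restraining alone leaves you defenseless
    ("build", "restrain"): 5,     # unilateral advantage
    ("build", "build"): 1,        # costly arms race
}


def best_response(theirs: str) -> str:
    """Return the strategy that maximizes my payoff given the rival's choice."""
    return max(STRATEGIES, key=lambda mine: PAYOFF[(mine, theirs)])


def nash_equilibria() -> list[tuple[str, str]]:
    """Pure-strategy profiles where each side is already playing a best response."""
    return [
        (a, b)
        for a, b in product(STRATEGIES, repeat=2)
        if best_response(b) == a and best_response(a) == b
    ]


if __name__ == "__main__":
    for theirs in STRATEGIES:
        print(f"If the rival plays {theirs!r}, my best response is {best_response(theirs)!r}")
    for a, b in nash_equilibria():
        print(f"Equilibrium: ({a}, {b}) with payoffs {PAYOFF[(a, b)]} and {PAYOFF[(b, a)]}")
    print("Payoff each side would get under mutual restraint:", PAYOFF[("restrain", "restrain")])
```

Whatever the rival does, `build` pays more, so both sides build and each ends up with 1 instead of the 3 that mutual restraint would have given them. That gap between the individually rational move and the collectively better outcome is what makes the arms race so hard to stop.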

The Death of Distance

War used to happen "over there." Now, it happens in the cloud.

Autonomous systems don't care about borders, fatigue, or supply lines. A "Software-Defined War" means the front line is wherever there is a signal. A 2021 UN Panel of Experts report on Libya already described Turkish-made Kargu-2 drones "hunting down" retreating soldiers while programmed to attack without any data link to a human operator.

This isn't just about the battlefield; it's about the erosion of the oldest brake on war: the human cost. When robots handle the killing, the political cost of war drops. If no body bags are coming home to the aggressor nation, the public won't protest. Autonomous weapons make war "clean" for the winner and "unavoidable" for the world.

The Insight: The next major conflict, possibly as soon as 2027, will be won not by the side with the most soldiers, but by the side with the most resilient "Model Weights." We are shifting from an era of Geopolitics to an era of Algopolitics. The legally binding UN treaty that the Secretary-General has called for by 2026 may well be signed, but it will be unenforceable: a "Paper Shield" against a "Silicon Sword." The winner of the next war will be the first nation to successfully "Open Source" their adversary's destruction.

If a machine decides who lives and dies today, what’s left for humans to decide tomorrow?