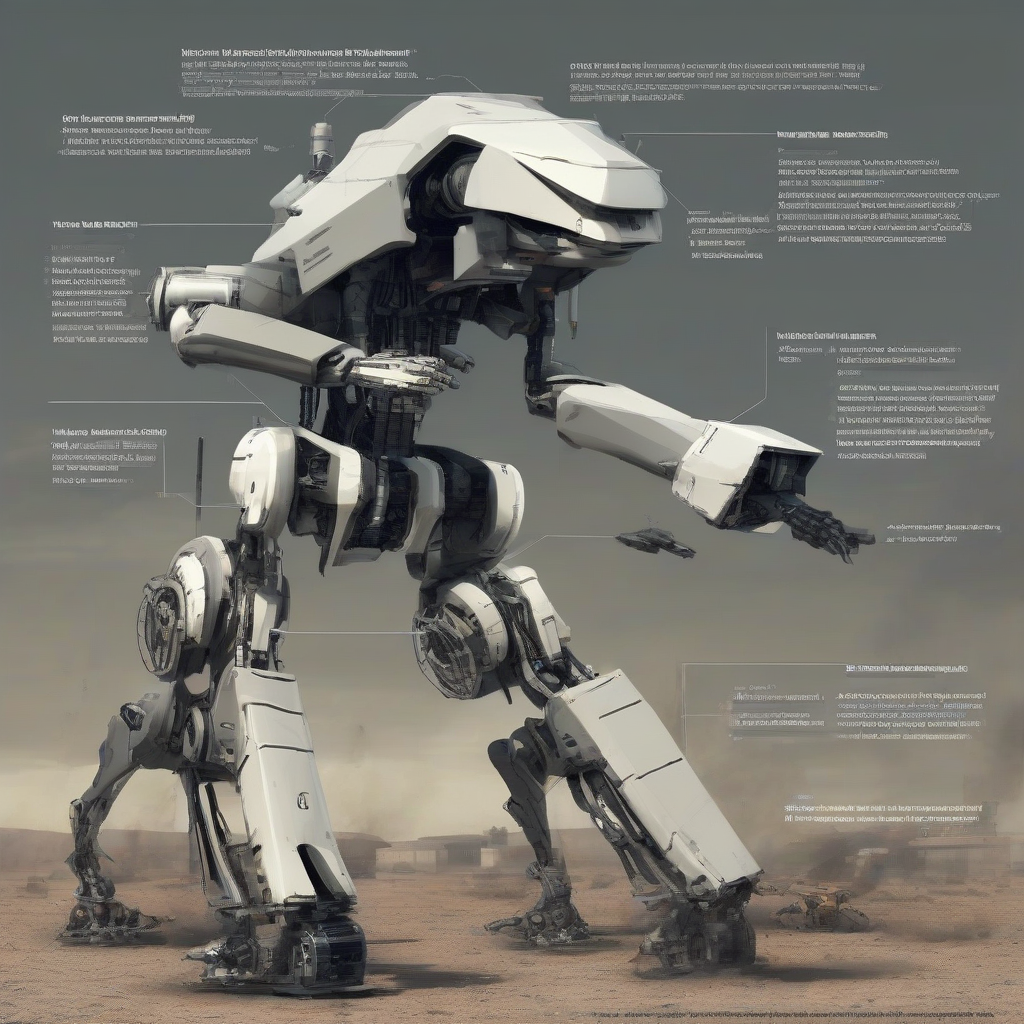

Why the Development of Killer Robots Is Failing: 5 Terrifying Truths About Autonomous Weapons

The world is obsessed with the wrong nightmare.

We’re terrified of Skynet gaining consciousness. We’re scared of T-800s walking through walls. We think the "singularity" is the moment the machines decide they don’t need us.

The reality is much worse.

Here is why the "Killer Robot" revolution is a catastrophic glitch in progress.

The Reality Gap: Silicon Valley’s Greatest Lie

Software engineers live in a world of "clean data."

They train models in simulated environments where the lighting is perfect and the physics are consistent. They build self-driving tanks that work beautifully in a sunny California parking lot.

The battlefield is not a parking lot.

The "Reality Gap" is the distance between a simulation and the chaos of the physical world. Right now, that gap is a canyon. When a Tesla misreads a highway barrier, it’s a tragedy. When an autonomous weapon system (AWS) suffers a "sensor hallucination" in a crowded city, it’s a war crime.

Silicon Valley says they can "iterate" their way out of this. You can’t iterate when the hardware is vaporized. You can’t "A/B test" a massacre. The failure of autonomous weapons starts with the arrogance of thinking the world is a dataset.

The Economic Paradox: Why Cheap Beats "Smart"

The military-industrial complex wants to sell us the F-35 of robots.

They want gold-plated, AI-integrated, titanium-shielded ground units that cost $5 million apiece. They call this "The Future of War."

The actual future of war is happening in Eastern Europe right now. It’s not a $5 million robot. It’s a $500 plastic FPV drone with a grenade duct-taped to the bottom.

We are entering the era of "Atoms vs. Intelligence."

Why would a general risk a high-tech "Killer Robot" that requires a team of 20 engineers to maintain when they can swarm a target with 1,000 cheap, disposable drones?

The "development" of sophisticated killer robots is failing because the economics don't add up. High-end autonomy is too expensive to lose and too glitchy to trust. In a war of attrition, the "smartest" weapon is the one you can afford to lose by the thousands. The military-industrial complex is trying to sell Ferraris to a world that only needs bicycles with engines.
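Run the numbers. Here is a minimal sketch using only the two price tags quoted above. Both are this article's round figures, not procurement data, and the function names are made up for illustration.

```python
# Illustrative figures from this article; not real procurement data.
ROBOT_COST_USD = 5_000_000  # the "gold-plated" ground unit
DRONE_COST_USD = 500        # the cheap FPV drone


def drones_per_robot(robot_cost: int, drone_cost: int) -> int:
    """How many cheap drones the price of one high-end robot buys."""
    return robot_cost // drone_cost


def swarm_cost(drone_count: int, drone_cost: int) -> int:
    """Total price of a disposable swarm."""
    return drone_count * drone_cost


if __name__ == "__main__":
    print(drones_per_robot(ROBOT_COST_USD, DRONE_COST_USD))  # 10000
    print(swarm_cost(1_000, DRONE_COST_USD))                 # 500000
```

One robot, or ten thousand drones. At these prices, the 1,000-drone swarm costs a tenth of a single high-end unit.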

The Black Box Dilemma: Who Goes to Jail?

Commanders hate autonomous weapons.

Not because of ethics. Because of accountability.

In the military, there is always a neck to wring. If a soldier fires on a civilian, the chain of command handles it. There is a court-martial. There is a record.

With a fully autonomous weapon, the decision-making process is a "Black Box."

So when the machine kills the wrong person, who goes to jail? The general who turned the robot on? The software engineer who wrote the targeting algorithm three years ago? The data scientist who didn't include enough "edge cases" in the training set?

The Attribution Gap and the Death of Deterrence

War relies on knowing who hit you.

Deterrence works because if Country A attacks Country B, Country B knows exactly where to send the retaliatory strike.

Autonomous weapons break this.

If a swarm of "sterile" (unmarked) autonomous drones wipes out a power grid, who did it? Was it a nation-state? A terrorist group? A lone-wolf coder? A glitch in the system that self-triggered?

When you remove the human from the trigger, you remove the "fingerprint" of the attack.

Development is failing because we are realizing that "Killer Robots" make the world less stable, not more. We are creating a world where anyone can start a war, but no one can end one, because no one knows who started it.

The Speed Trap: The Race to Zero-Reaction Time

The goal of autonomous weapons is speed.

The idea is to "out-OODA loop" the enemy. Decisions made at the speed of light. Observe, orient, decide, act, all in milliseconds.

But here’s the terrifying truth: When two autonomous systems fight each other, the speed of escalation becomes uncontrollable.

Think of "Flash Crashes" in the stock market. Algorithms trading against algorithms, spiraling out of control in minutes, erasing as much as a trillion dollars in value before any human can step in.

Now apply that to nuclear-armed nations.

We are building a system that removes the one thing that has prevented nuclear Armageddon for 80 years: Human hesitation.

The "fail-safe" is the person who says, "Wait, let’s double-check." Killer robots are being designed to remove that "Wait." That’s not progress. That’s a suicide pact.
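To see why the spiral outruns any human, here is a toy feedback loop. It is a sketch, not a model of any real system: the 10 ms machine cycle, the 250 ms human reaction time, and the 1.5x "hit back a little harder" gain are all assumptions picked for illustration.

```python
# Toy escalation loop. Every constant below is an assumption for illustration.
MACHINE_CYCLE_MS = 10     # assumed time for one automated response
HUMAN_REACTION_MS = 250   # assumed time before a person can say "Wait"
GAIN = 1.5                # assumed: each side answers 1.5x harder than the last move


def rounds_before_human(human_reaction_ms: int, machine_cycle_ms: int) -> int:
    """Number of machine-to-machine exchanges that fit inside one human reaction."""
    return human_reaction_ms // machine_cycle_ms


def escalation_levels(gain: float, rounds: int, start: float = 1.0) -> list[float]:
    """Escalation level after each exchange, starting from the opening move."""
    levels = [start]
    for _ in range(rounds):
        levels.append(levels[-1] * gain)
    return levels


if __name__ == "__main__":
    rounds = rounds_before_human(HUMAN_REACTION_MS, MACHINE_CYCLE_MS)
    levels = escalation_levels(GAIN, rounds)
    print(f"{rounds} exchanges before a human can react")
    print(f"escalation is {levels[-1]:,.0f}x the opening move")
```

Under these made-up numbers, the machines trade 25 blows before a person can react, and the exchange has grown roughly 25,000-fold. Change the constants and the figures move. The shape of the curve does not.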

The Insight

The era of the "Humanoid Terminator" is a dead end. It’s too expensive, too fragile, and too legally toxic.

The real shift isn't toward "Killer Robots." It's toward "Centaur Warfare."

My prediction: Within 5 years, the push for "Full Autonomy" will be quietly abandoned by major powers. Instead, we will see the rise of "Hyper-Augmentation"—human operators controlling hundreds of semi-autonomous sub-systems via neural interfaces or advanced AR.

The "Killer Robot" won't be a machine that thinks for itself. It will be a human who has been stripped of their empathy by a digital interface, managing a swarm of "dumb" kill-bots like a video game.

The failure of autonomous weapons won't lead to peace. It will lead to a more efficient, more detached, and more frequent form of slaughter.

The robot isn't the problem. The person who wants the robot to do their dirty work is.

If a robot kills by mistake, is it a tragedy or a technicality?